Doug Kerr


As you may know, I have lately been trying to understand the real objective of using a "hemispherical receptor" incident-light exposure meter, as pioneered by Don Norwood.

Norwood tells us that its real point is to have the meter recognize "all the light falling on a subject that would result in reflected light toward the camera." But just what does that mean, photometrically?

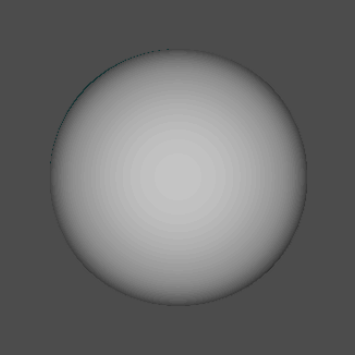

A major step forward for me came when I realized that the reading of a "hemispherical receptor" meter is simply the average illuminance on the receptor (averaged by area of the receptor).
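To make "average illuminance by area of the receptor" concrete, here is a minimal Python sketch. It assumes an idealized dome of unit radius, with its axis pointing at the camera (+z) and every point obeying the cosine law exactly. The dome is lit by a single distant source whose illuminance, measured square to the beam, is `e0`, and which arrives `theta_deg` off the dome's axis. The function name and parameters are hypothetical, chosen for illustration. The code integrates the illuminance numerically over the dome and divides by the dome's area. For a source from 0 to 90 degrees off axis, the result should match the closed form E0(1 + cos θ)/4. That closed form is the beam's flux through the dome's projected area, πr²(1 + cos θ)/2, spread over the dome's surface area, 2πr².

```python
import math


def dome_average_illuminance(e0, theta_deg, n_alpha=200, n_phi=400):
    """Area-weighted mean illuminance over a unit hemispherical dome (axis +z).

    Hypothetical helper: one distant source of illuminance e0 (measured square
    to the beam) arrives theta_deg off the dome axis. Every dome element is
    assumed to follow the cosine law exactly.
    """
    th = math.radians(theta_deg)
    sx, sz = math.sin(th), math.cos(th)  # unit vector toward the source (x-z plane)
    d_a = (math.pi / 2) / n_alpha        # step in polar angle from the dome axis
    d_p = (2 * math.pi) / n_phi          # step in azimuth
    flux = area = 0.0
    for i in range(n_alpha):
        a = (i + 0.5) * d_a
        w = math.sin(a) * d_a * d_p      # area of this patch on a unit sphere
        for j in range(n_phi):
            p = (j + 0.5) * d_p
            # cos(angle of incidence) = element normal . source direction
            cos_inc = math.sin(a) * math.cos(p) * sx + math.cos(a) * sz
            flux += e0 * max(0.0, cos_inc) * w  # light from behind contributes 0
            area += w
    return flux / area


if __name__ == "__main__":
    for theta in (0, 30, 60, 90):
        numeric = dome_average_illuminance(1.0, theta)
        closed = (1 + math.cos(math.radians(theta))) / 4
        print(f"{theta:2d} deg off axis: numeric {numeric:.4f}, closed form {closed:.4f}")
```

With the source on the axis, the average comes out to E0/2, half the illuminance at the dome's pole. This is because the elements toward the rim are struck more and more obliquely.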

Since we consider the hemispherical receptor a proxy for the part of our subject's surface that can be seen from the camera, the meter reading gives us an approximation of the average illuminance on the camera-visible subject surface (averaged by area of that surface).

But why should that be our best single determinant of the desirable photographic exposure? It is not even the average luminance, as seen from the camera, of subject elements of some given reflectance. (This has to do with the projected area, toward the camera, of elements with different orientations.)
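The point about projected area can be checked numerically as well. This sketch makes two further assumptions that are not in the post. First, the camera is far away on the dome's axis, so an element tilted by α from the axis occupies an image area proportional to dA cos α. Second, the subject surface is matte (Lambertian), so an element's luminance is ρE/π whatever the viewing direction. Under those assumptions, the average luminance across the camera's image is ρ/π times the illuminance averaged with cos α weights. The meter, by contrast, averages with plain area weights. With the source on the axis, the two averages come out to E0/2 and 2E0/3 respectively. `dome_means` and the reflectance value `rho` are illustrative, not taken from the post.

```python
import math


def dome_means(e0, theta_deg, n_alpha=200, n_phi=400):
    """Return (area_weighted, camera_weighted) mean illuminance on a unit dome.

    Hypothetical helper. Assumptions: dome axis +z points at a distant camera;
    one distant source of illuminance e0 arrives theta_deg off that axis.
    area_weighted is what the meter averages; camera_weighted weights each
    element by its projected area toward the camera (dA * cos(alpha)), which,
    for a matte (Lambertian) surface, is proportional to mean image luminance.
    """
    th = math.radians(theta_deg)
    sx, sz = math.sin(th), math.cos(th)
    d_a = (math.pi / 2) / n_alpha
    d_p = (2 * math.pi) / n_phi
    e_area = area = e_proj = proj = 0.0
    for i in range(n_alpha):
        a = (i + 0.5) * d_a
        w = math.sin(a) * d_a * d_p   # true surface area of the patch
        wp = w * math.cos(a)          # its area as projected toward the camera
        for j in range(n_phi):
            p = (j + 0.5) * d_p
            e = e0 * max(0.0, math.sin(a) * math.cos(p) * sx + math.cos(a) * sz)
            e_area += e * w
            area += w
            e_proj += e * wp
            proj += wp
    return e_area / area, e_proj / proj


if __name__ == "__main__":
    rho = 0.18  # illustrative reflectance, not from the post
    for theta in (0, 45, 90):
        by_area, by_camera = dome_means(1.0, theta)
        print(f"{theta:2d} deg: meter average {by_area:.3f}, "
              f"camera-weighted {by_camera:.3f}, "
              f"mean luminance {rho * by_camera / math.pi:.4f}")
```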

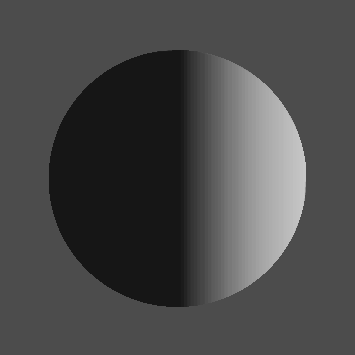

But now I think as follows. We are not really interested in the average illuminance on the visible subject surface. We really would like to know the midpoint of the range of illuminance on that surface.

That way, for subject elements of any given reflectance, the range of luminance toward the camera (and thus the range of photometric exposure on the sensor) would be symmetrically disposed about the ideal value, which is probably our best bet.

But we cannot determine the midpoint of the illuminance range with any simple "one-shot" meter measurement.

But the photometric geometry is such that the midpoint does not depart much from the average.
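The midpoint and the average can also be compared numerically. The sketch below uses hypothetical names, an idealized cosine-law dome, and distant sources only. It accepts several sources, say a key and a fill, and adds their cosine-law contributions at each point of the dome. It then reports both the area-weighted average and the midpoint of the sampled range. Because the range comes from grid samples, the extremes are approximate to within the grid spacing. How close the two values come depends on the lighting arrangement; with a single source on the axis, both are E0/2.

```python
import math


def dome_mean_and_midpoint(sources, n_alpha=200, n_phi=400):
    """Compare the area-weighted mean illuminance on a unit dome (axis +z) with
    the midpoint of its illuminance range, for a list of distant sources.

    Hypothetical helper. Each source is (e0, theta_deg, phi_deg): illuminance
    square to the beam, angle off the dome axis, and azimuth. The range is
    found by sampling the same grid, so max/min are approximate.
    """
    dirs = []
    for e0, th_deg, ph_deg in sources:
        th, ph = math.radians(th_deg), math.radians(ph_deg)
        dirs.append((e0, math.sin(th) * math.cos(ph),
                     math.sin(th) * math.sin(ph), math.cos(th)))
    d_a = (math.pi / 2) / n_alpha
    d_p = (2 * math.pi) / n_phi
    total = area = 0.0
    e_min, e_max = math.inf, -math.inf
    for i in range(n_alpha):
        a = (i + 0.5) * d_a
        w = math.sin(a) * d_a * d_p
        for j in range(n_phi):
            p = (j + 0.5) * d_p
            nx, ny, nz = math.sin(a) * math.cos(p), math.sin(a) * math.sin(p), math.cos(a)
            # Cosine law per source; a source behind the element contributes nothing.
            e = sum(s_e0 * max(0.0, nx * sx + ny * sy + nz * sz)
                    for s_e0, sx, sy, sz in dirs)
            total += e * w
            area += w
            e_min, e_max = min(e_min, e), max(e_max, e)
    return total / area, (e_min + e_max) / 2


if __name__ == "__main__":
    # Illustrative lighting only: a key 45 deg off axis plus a weaker fill on axis.
    key_and_fill = [(1.0, 45.0, 0.0), (0.25, 0.0, 0.0)]
    mean, mid = dome_mean_and_midpoint(key_and_fill)
    print(f"mean {mean:.3f}, midpoint {mid:.3f}, "
          f"difference {math.log2(mid / mean):+.2f} stops")
```

The key and fill values in the `__main__` block are arbitrary illustrations, not measurements. The difference is printed in stops, since that is how an exposure discrepancy would normally be judged.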

Thus by measuring the average illuminance (which the hemispherical receptor meter does for us handily) we get a value that is highly useful in determining the desirable photographic exposure.

Best regards,

Doug
